

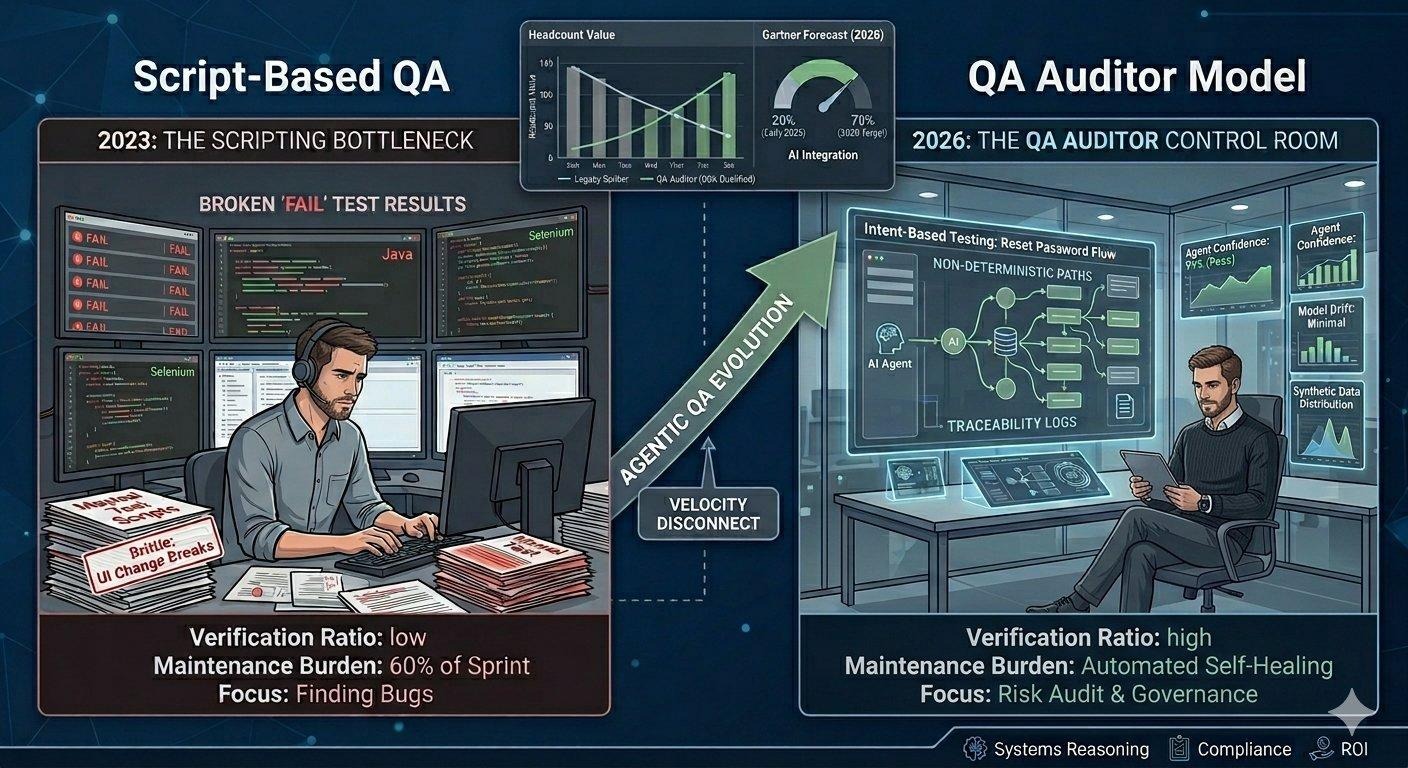

The primary bottleneck in modern software delivery is no longer code production. Since the widespread adoption of AI-augmented development, the volume of pull requests has grown sharply. The verification layer, though, has barely moved. For most teams it's still a human writing Playwright scripts against a codebase an AI generated in minutes.

That's the Velocity Disconnect. And it's why a growing number of engineering organisations are retiring the traditional Tester job function and replacing it with something more precise: the QA Auditor.

Traditional test automation has always operated on a simple ratio: more features mean more scripts. For most of the 2010s, that ratio was manageable. Teams scaled headcount, frameworks matured, and CI pipelines kept the feedback loop tight.

AI-generated code broke the ratio entirely. When a developer using an AI coding tool can produce a working feature in 20 minutes that would have taken three hours manually, the test authorship pipeline doesn't just fall behind — it structurally can't catch up.

The numbers show this shift already underway. According to the State of Testing 2024 report, Selenium usage dropped from 78% to 56% between 2023 and 2025 — the sharpest two-year decline the tool has seen since its peak. Teams aren't abandoning automation. They're abandoning the script-first model that made Selenium the default.

The maintenance tax on legacy scripts is the silent killer. Teams spend more sprint time keeping old tests green than writing new ones.

Meanwhile, 68% of organisations are already using generative AI to improve quality engineering, per the World Quality Report 2024. They're not supplementing their script libraries — they're rethinking what a test even is.

In the old model, a tester receives a feature, writes a script to exercise it, runs the script, and reports failures. The tester's value lay in execution speed and the ability to find the edge case.

The Auditor model flips this. Instead of writing the test, the Auditor defines the Quality Objective (the business outcome that must hold) and hands it to an autonomous testing agent. The agent figures out how to verify it. The Auditor reviews whether the agent's reasoning was sound.
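To make the idea of a Quality Objective concrete, here is a minimal sketch of how one might be written down as data rather than as a script. Everything in it is an assumption for illustration: the `QualityObjective` class, its field names, the step names, and the 0.9 default threshold are hypothetical and do not come from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityObjective:
    """Hypothetical record an Auditor writes instead of a test script."""
    name: str
    outcome: str                          # business outcome that must hold
    required_steps: tuple[str, ...] = ()  # checkpoints any valid path must cross
    min_confidence: float = 0.9           # assumed threshold, tune per risk level

    def to_brief(self) -> str:
        """Render the objective as plain text to hand to a testing agent."""
        lines = [f"Objective: {self.name}", f"Must hold: {self.outcome}"]
        lines += [f"Required step: {step}" for step in self.required_steps]
        lines.append(f"Minimum confidence: {self.min_confidence:.2f}")
        return "\n".join(lines)


# Example objective for a password reset flow (step names are made up).
reset_password = QualityObjective(
    name="Reset Password",
    outcome="Only the account owner can set a new password",
    required_steps=("submit_email", "verify_email_token", "set_new_password"),
)
brief = reset_password.to_brief()
print(brief)
```

The point of the sketch is that the objective states what must be true and which checkpoints matter, while leaving the choice of UI path to the agent.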

This isn't abstract. In October 2025, Gartner published its inaugural Magic Quadrant for AI-Augmented Software Testing Tools — the first time the analyst firm created this category at all. The tools are described as context-aware, data-driven, and increasingly autonomous platforms. That a Magic Quadrant now exists for this is the clearest possible market signal: agentic testing has crossed from experiment into enterprise procurement.

The agentic QA evolution — from scripting bottleneck to Auditor control room

Here's what makes the Auditor role genuinely difficult, and why it can't be handed to a junior hire: the testing tool itself is now non-deterministic.

When you ask an autonomous agent to verify a Reset Password flow, it might find five different valid paths through the UI. All five return a Pass. But a human Auditor reviewing the Traceability Log needs to ask harder questions: Did any of those paths reflect how a real user navigates? Did the agent shortcut through a validation step that a malicious actor could also exploit? Is the agent's model of the UI still accurate, or has it begun to drift as the app evolves?

This is what Agent Governance looks like in practice: checking Path Validity (did the agent take a route no real user could?), monitoring Model Drift (is the agent's UI understanding still current?), and enforcing Confidence Thresholds (a low-confidence Pass is not a Pass).
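The three governance checks can be sketched as a small review function over one Traceability Log entry. This is an illustrative sketch, not the behaviour of any real product: the `AgentRun` record, its fields, the version strings, and the 0.9 threshold are all assumed. Path Validity is approximated here by checking that required steps appear in order, which is only one possible proxy.

```python
from dataclasses import dataclass


@dataclass
class AgentRun:
    """Hypothetical Traceability Log entry for one path an agent explored."""
    path: list[str]          # ordered UI steps the agent reported taking
    verdict: str             # "pass" or "fail", as reported by the agent
    confidence: float        # agent's self-reported confidence, 0.0 to 1.0
    ui_model_version: str    # UI version the agent's model was built against


def audit_run(run, required_steps, current_ui_version, min_confidence=0.9):
    """Return the governance findings for one run (empty list means clean)."""
    findings = []

    # Path Validity: every required step must appear in the path, in order.
    remaining = iter(run.path)
    if not all(step in remaining for step in required_steps):
        findings.append("path_validity")

    # Model Drift: the agent's picture of the UI is older than the live app.
    if run.ui_model_version != current_ui_version:
        findings.append("model_drift")

    # Confidence Threshold: a low-confidence Pass is not a Pass.
    if run.verdict == "pass" and run.confidence < min_confidence:
        findings.append("low_confidence_pass")

    return findings


required = ["submit_email", "verify_email_token", "set_new_password"]

# A run that reports Pass but skipped token verification.
shortcut_run = AgentRun(
    path=["open_reset_page", "submit_email", "set_new_password"],
    verdict="pass", confidence=0.72, ui_model_version="v41",
)
clean_run = AgentRun(
    path=["open_reset_page", "submit_email", "verify_email_token",
          "set_new_password"],
    verdict="pass", confidence=0.97, ui_model_version="v42",
)

shortcut_findings = audit_run(shortcut_run, required, current_ui_version="v42")
clean_findings = audit_run(clean_run, required, current_ui_version="v42")
print(shortcut_findings)
print(clean_findings)
```

In this sketch both runs report a Pass, but only the second survives review. That is the distinction the Auditor is there to make.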

Gartner's December 2025 report, "Predicts 2026: AI Agents Will Transform IT Infrastructure and Operations", states explicitly that as autonomy increases, organisations will require stronger operational control and governance to ensure safety, compliance, and predictable outcomes. The pattern is the same whether the agent is deploying infrastructure or executing tests: more autonomy demands more rigorous human governance.

The market data is unusually directional for a technology this new.

A 3.5× increase in three years is not a gradual rollout. It's a platform shift — comparable to the move from waterfall to agile, or from manual to automated testing in the late 2000s. Teams that waited out those transitions didn't fall slightly behind; they required wholesale restructuring to catch up.
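For a sense of scale, the 3.5× figure can be converted into an implied yearly rate. The calculation below assumes the growth compounded evenly across the three years, which the cited figure does not state; the function name is illustrative only.

```python
def annual_growth_rate(multiple: float, years: float) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1


rate = annual_growth_rate(3.5, 3)
print(f"{rate:.1%}")  # prints 51.8%
```

Under that assumption, the quantity being measured would be growing by roughly half again every year.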

The job market is already repricing QA talent accordingly. Engineers with Playwright or Cypress expertise now earn 24% more than those primarily using Selenium. More tellingly, 32% of QA job listings now require candidates to pass DSA interviews — the same technical screen historically reserved for software engineers. Employers aren't looking for faster script-writers. They're looking for engineers who can reason about systems.

The good news for engineering leaders: the raw material for excellent QA Auditors already exists in most teams. Senior testers who have spent years building domain knowledge, understanding compliance requirements, and thinking about edge cases at a business level — not just a UI level — are exactly who this model needs.

What blocks the transition isn't capability. It's that those same senior engineers are buried in script maintenance, fighting to keep aging test suites green while the codebase evolves around them.

The practical starting point is a Governance Readiness Audit. Evaluate your QA team not on lines of automation code produced, but on three questions:

If your developers are complaining that QA can't keep up, it's almost certainly not a headcount problem. It's a methodology mismatch — a 2026 volume problem being addressed with a 2020 toolset.

The goal is to move your best quality engineers away from the keyboard and into the Control Room. The machine does the execution. The human provides the judgment.

At a glance

BaseRock empowers teams to implement intent-based testing, autonomous agents, and risk-aware validation across modern DevOps pipelines.


Q1: What is the velocity disconnect in software testing?

It refers to the gap between rapid AI-driven code generation and slower manual testing processes.

Q2: What is a QA Auditor?

A QA Auditor defines testing intent and validates AI-generated test outcomes instead of writing scripts.

Q3: Why is script-based testing becoming outdated?

Script-based testing cannot scale with the speed and volume of AI-generated code.

Source

All statistics are sourced from verifiable published research: State of Testing 2024; World Quality Report 2024 & 2025; Gartner Magic Quadrant for AI-Augmented Software Testing Tools (Herschmann et al., October 2025); Gartner Predicts 2026: AI Agents Will Transform IT Infrastructure and Operations (December 2025); job market analysis from prepare.sh, 2025. No statistics were fabricated or extrapolated beyond their sourced scope.
