For years, development operated on a simple assumption: code is relatively stable. That assumption no longer holds. AI has fundamentally changed not just how code is written, but how it is rewritten, iterated, and replaced. And the data proves it.

Recent large-scale analysis shows:

We are no longer evolving code. We are regenerating it.

AI has increased development velocity—but also introduced:

We are writing more code than ever, but trusting it less than ever.

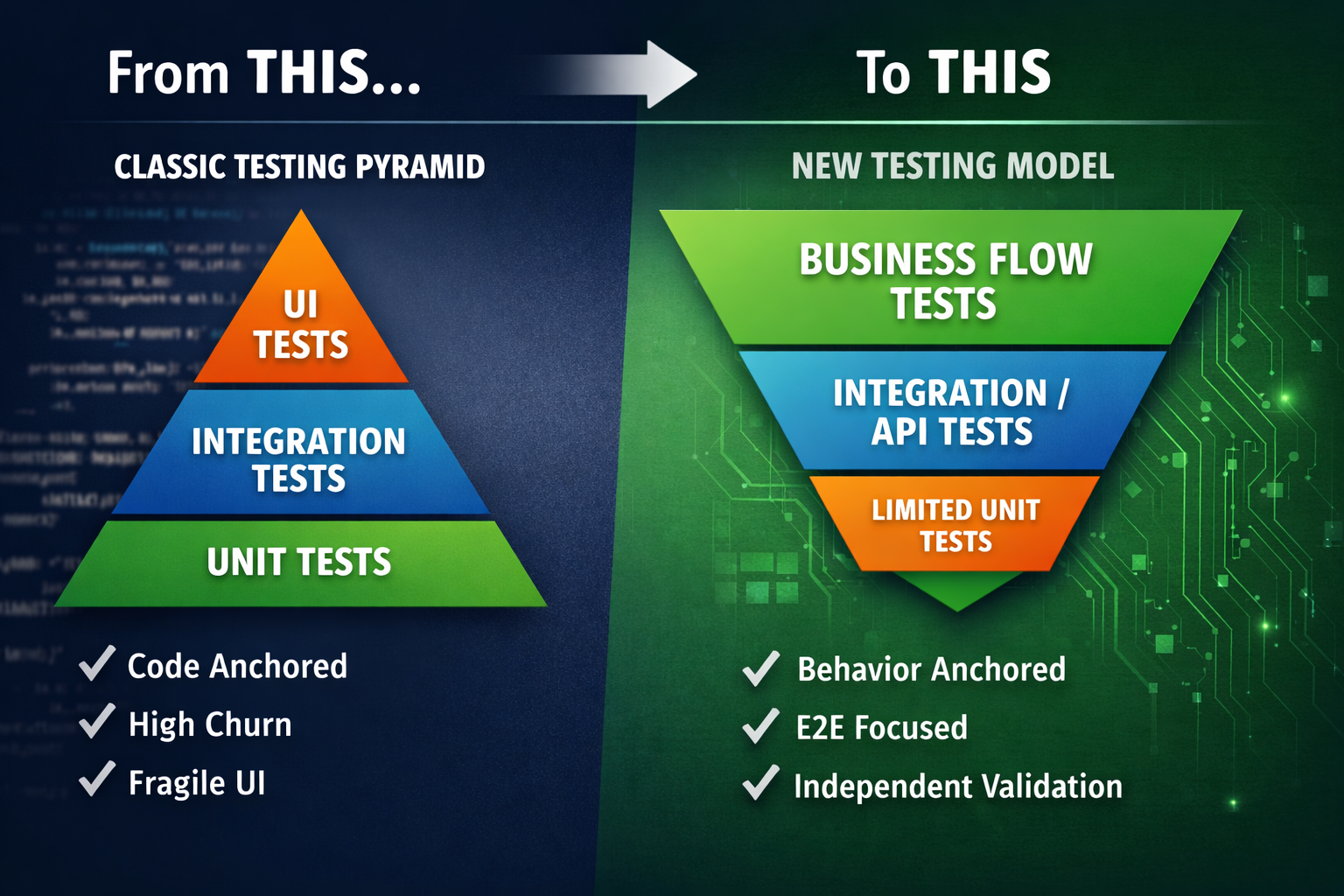

The traditional testing pyramid assumes:


With frequent changes, they become a constant source of breakage.

AI-generated code leads to:

Validation shifts from writing code to understanding and verifying it.

In the AI era: Correctness is no longer anchored to code. It is anchored to behavior.

What matters:

Stop testing functions. Start testing workflows:

These flows remain stable, even when code changes.
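As a minimal sketch of what a workflow-level test can look like, the example below drives a small checkout flow through its public entry points and asserts only on the business outcome. The `Cart` class, the `checkout` function, and the prices are hypothetical and exist only for illustration. They are not taken from any particular codebase.

```python
from dataclasses import dataclass, field


@dataclass
class Cart:
    """Hypothetical in-memory cart, used only for illustration."""
    items: dict = field(default_factory=dict)  # sku -> (unit_price_cents, quantity)

    def add(self, sku: str, unit_price_cents: int, quantity: int = 1) -> None:
        price, existing = self.items.get(sku, (unit_price_cents, 0))
        self.items[sku] = (price, existing + quantity)

    def total_cents(self) -> int:
        return sum(price * qty for price, qty in self.items.values())


def checkout(cart: Cart, paid_cents: int) -> dict:
    """Hypothetical workflow entry point: returns an order record or raises."""
    if not cart.items:
        raise ValueError("cannot check out an empty cart")
    total = cart.total_cents()
    if paid_cents < total:
        raise ValueError("payment does not cover the order total")
    return {
        "status": "confirmed",
        "total_cents": total,
        "change_cents": paid_cents - total,
    }


def test_customer_can_buy_two_items_and_get_change() -> dict:
    # Drive the workflow only through its public entry points.
    cart = Cart()
    cart.add("book", 1500)
    cart.add("pen", 200, quantity=2)
    order = checkout(cart, paid_cents=2000)
    # Assert on the business outcome, not on how the total was computed.
    assert order["status"] == "confirmed"
    assert order["total_cents"] == 1900
    assert order["change_cents"] == 100
    return order
```

Because the test never touches `Cart.items` or any helper directly, the internals can be regenerated freely as long as the observable outcome of the flow stays the same.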

Characteristics:

Benefits:

Failures now happen at system boundaries:

Integration tests should be treated as first-class citizens.
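One way to make a boundary explicit is a small contract check on the payload that crosses it. The sketch below assumes a hypothetical order service and a hand-written contract (`ORDER_CONTRACT`). A real project would more likely use a schema or contract-testing tool, so treat this as an illustration of the idea rather than a recommended implementation.

```python
import json

# Hypothetical contract for an order-service response: field name -> required type.
ORDER_CONTRACT = {"order_id": str, "status": str, "total_cents": int}


def contract_violations(payload: dict, contract: dict) -> list:
    """Return human-readable violations; an empty list means the payload conforms."""
    problems = []
    for name, expected_type in contract.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            problems.append(
                f"wrong type for {name}: expected {expected_type.__name__}, "
                f"got {type(payload[name]).__name__}"
            )
    return problems


def fake_order_service(order_id: str) -> str:
    """Stand-in for the real service; returns the JSON body it would send."""
    return json.dumps(
        {"order_id": order_id, "status": "confirmed", "total_cents": 1900}
    )


def test_order_response_honours_contract() -> None:
    # Parse the body exactly as a consumer would, then check it against the contract.
    body = json.loads(fake_order_service("A-1"))
    assert contract_violations(body, ORDER_CONTRACT) == []
```

The check fails loudly when a regenerated producer renames a field or changes its type, which is the kind of boundary break that function-level tests on either side tend to miss.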

Unit tests still matter, but their role changes.

Best suited for:

They should no longer be treated as the primary indicator of correctness.

Limit UI tests to:

Move most validation to:

This improves speed, reliability, and maintainability.

One of the biggest risks in AI-assisted development:

When the same AI generates both the code and its tests, it can encode the same misunderstanding in both.

The result: a fully passing test suite that validates incorrect behavior.
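The failure mode can be illustrated with a small, hypothetical example. Assume the business rule is "orders of 100.00 or more get 10% off". The implementation below misreads "or more" as "more than", and the test generated alongside it repeats the same misreading, so it passes. A test written from the stated rule catches the error. All names and the rule itself are assumptions made for the sketch.

```python
# Assumed business rule (hypothetical): orders of 100.00 or more get 10% off.
# Amounts are integer cents to avoid floating-point rounding.


def discounted_total_cents(subtotal_cents: int) -> int:
    """Generated implementation that misreads "or more" as "more than"."""
    if subtotal_cents > 10_000:  # bug: the rule calls for >=
        return subtotal_cents * 90 // 100
    return subtotal_cents


def generated_test_passes() -> bool:
    """Check derived from the same misreading: it agrees with the bug."""
    return discounted_total_cents(10_000) == 10_000


def spec_derived_test_passes() -> bool:
    """Check written from the stated rule, independently of the implementation."""
    return discounted_total_cents(10_000) == 9_000
```

Here `generated_test_passes()` returns `True` while `spec_derived_test_passes()` returns `False`. The green result from the first check says nothing about whether the rule is actually honoured, which is why expectations are better derived from the specification than from the generated code.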

Replace the traditional pyramid with:

This model prioritizes confidence over coverage.

The core challenge has changed, from writing correct code to verifying correctness in constantly changing systems.

This requires:

Code churn is no longer a warning signal; it is a structural property of AI-driven development. In a world where code is increasingly disposable, business behavior becomes the only stable contract.
