
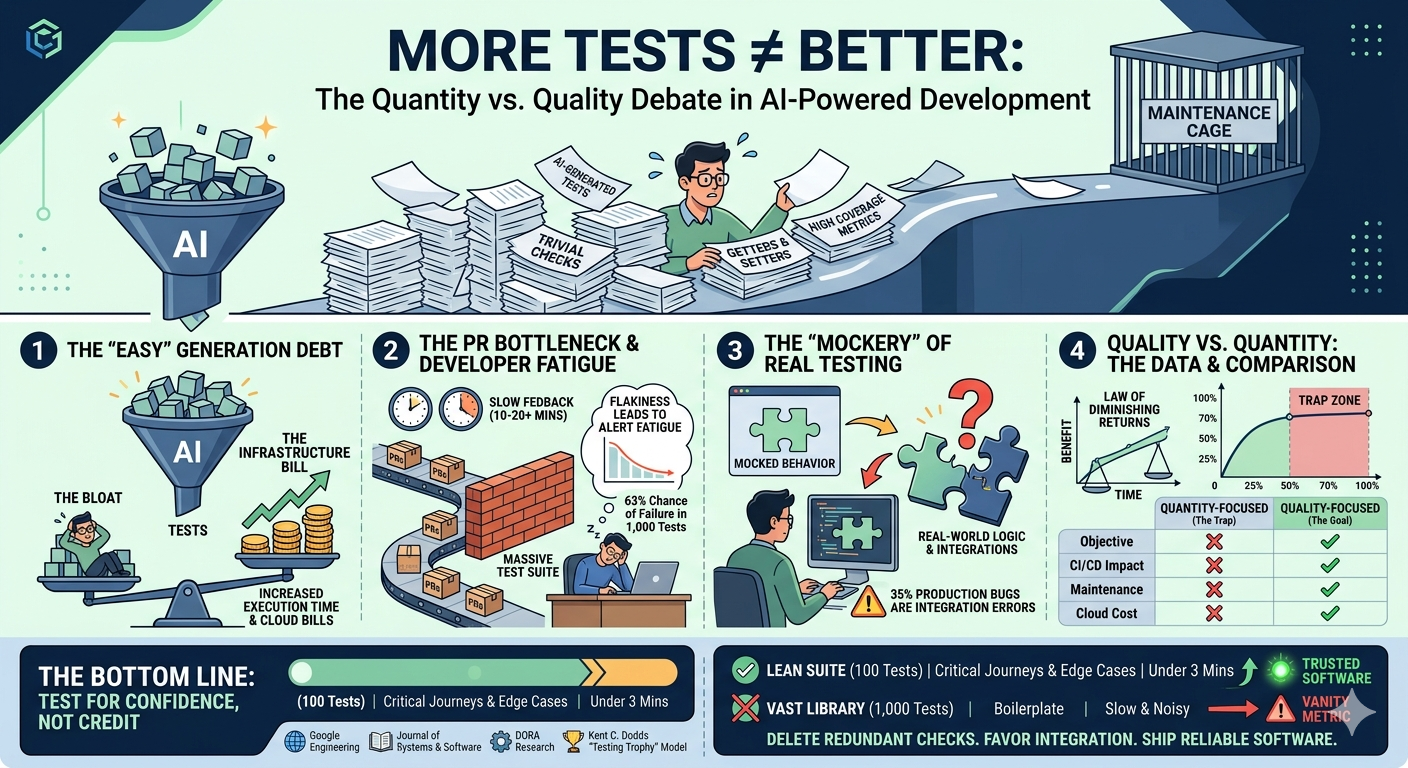

In the modern era of AI-powered development, writing tests has never been easier. With a single prompt or a "generate unit tests" click, you can produce thousands of lines of test code in seconds. It feels productive. It looks great on a dashboard. But as many engineering teams are discovering, past a certain point the quantity of tests stops being a safety net and starts becoming a cage.

When we prioritize the volume of tests over their intent, we aren't building better software—we are building a maintenance nightmare.

The rise of generative AI means that code coverage targets that once took weeks to hit can now be reached in hours. However, this ease of creation is a double-edged sword.

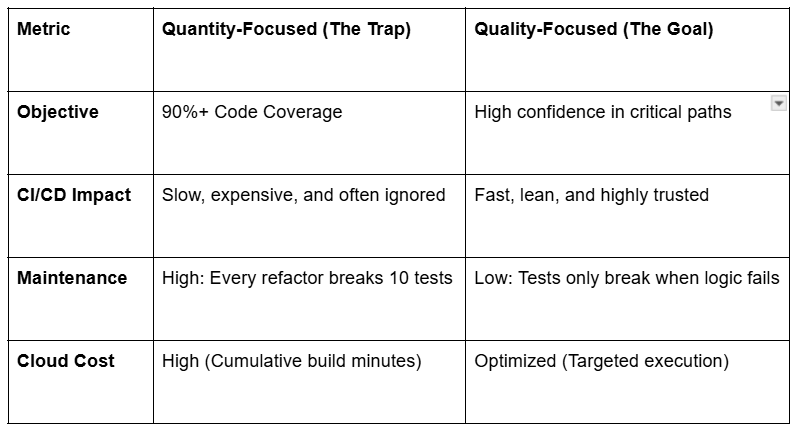

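In fast-paced environments, productivity is often measured by the number of pull requests (PRs) merged. A massive test suite works directly against this velocity: every PR waits on a longer CI run, and a harmless refactor can break dozens of brittle tests that must be fixed before the change can merge.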

To keep large test suites running fast, developers often rely heavily on mocks. If your test mocks the database, the external API, and the internal service logic, you aren't testing your code; you're testing your assumptions about how those pieces behave.

The Risk: Industry post-mortems show that roughly 35% of production bugs are integration errors. If your tests rely on mocks that assume a behavior that has since changed in the real world, your tests will pass while your production environment crashes.

Research suggests that while moving from 0% to 70% coverage significantly reduces bugs, the benefits taper off sharply beyond that point. The "Law of Diminishing Returns" applies strongly to testing.

A lean suite of 100 tests that covers critical user journeys, handles messy edge cases, and runs in under three minutes is far more valuable than a library of 1,000 tests that merely confirm your boilerplate code works as intended.
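As a rough illustration of the difference in weight, the sketch below contrasts a boilerplate test with edge-case tests. `Product`, `parse_price`, and all test names are hypothetical, written only for this example; the tests use plain `assert` statements so they run under pytest or as a standalone script.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation


@dataclass
class Product:
    # Hypothetical model used only for this illustration.
    name: str
    price: Decimal


def parse_price(raw: str) -> Decimal:
    """Hypothetical helper: parse user-entered prices such as ' $1,299.50 '."""
    cleaned = raw.strip().lstrip("$").replace(",", "")
    if not cleaned:
        raise ValueError("empty price")
    try:
        value = Decimal(cleaned)
    except InvalidOperation as exc:
        raise ValueError(f"not a price: {raw!r}") from exc
    # Reject NaN/Infinity first so we never order-compare a NaN.
    if not value.is_finite() or value < 0:
        raise ValueError(f"out of range: {raw!r}")
    return value


# Low value: re-checks what @dataclass already guarantees.
def test_product_stores_name():
    assert Product("Lamp", Decimal("10")).name == "Lamp"


# High value: messy but realistic input a user might actually type.
def test_parse_price_accepts_messy_input():
    assert parse_price(" $1,299.50 ") == Decimal("1299.50")
    assert parse_price("0") == Decimal("0")


# High value: inputs that must be rejected rather than silently accepted.
def test_parse_price_rejects_bad_input():
    for bad in ["", "   ", "abc", "-5", "NaN", "Infinity"]:
        try:
            parse_price(bad)
        except ValueError:
            continue
        raise AssertionError(f"accepted invalid price {bad!r}")


if __name__ == "__main__":
    for test in (test_product_stores_name,
                 test_parse_price_accepts_messy_input,
                 test_parse_price_rejects_bad_input):
        test()
    print("all tests passed")
```

The first test can only fail if Python's `dataclasses` module breaks; the other two encode decisions about real user input, which is where bugs tend to hide.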

Stop counting your tests and start weighing them. Delete redundant checks, favor integration over excessive mocking, and remember: The goal of testing is to ship reliable software, not to hit a vanity metric on a chart.
